Neurophilosophy and A.I.

The more I get into philosophical and philosophy-adjacent discussions of current-generation “artificial intelligence” (large language models and the like), the more dismayed I am not to see any discussion of the large body of relevant work by Paul Churchland.1 Paul is not ordinarily thought of as a philosopher of A.I., but rather a philosopher of mind and of neuroscience. However, for reasons I hope to make clear in this post, Paul was one of the first philosophers to engage in detail with the predecessors of the technology behind systems like ChatGPT, and he provides quite extensive conceptual resources for beginning to address many of the ontological and epistemological questions about this type of A.I. His work can even help us think about the design of “ethical A.I.” (though perhaps not more practical ethical questions about the use of A.I.).

Paul Churchland is a naturalistic philosopher who has written widely on philosophy of science, philosophy of mind, epistemology, and philosophy of language. For most of his career, the primary naturalistic lens through which he has pursued a variety of philosophical concerns has been that of the neurosciences, and from roughly 1986 on, that primarily meant engaging with, interpreting, and applying insights from the emerging science of artificial neural networks (connectionism or parallel distributed processing).2 Beginning in the mid-1980s, Paul engaged carefully with pioneering work in artificial neural networks by, e.g., Dana Ballard, Garrison Cottrell, Geoffrey Hinton, James McClelland, Andras Pellionisz, David Rumelhart, Terrence Sejnowski, and Ronald J. Williams. Through the next three decades, Paul developed a distinctive interpretation of the cognitive activities of artificial neural networks (ANNs), understood as models of the natural neural networks (NNNs) that make up our brains, and applied that interpretation to issues in epistemology, the metaphysics of mind, the philosophy of science, and moral psychology.

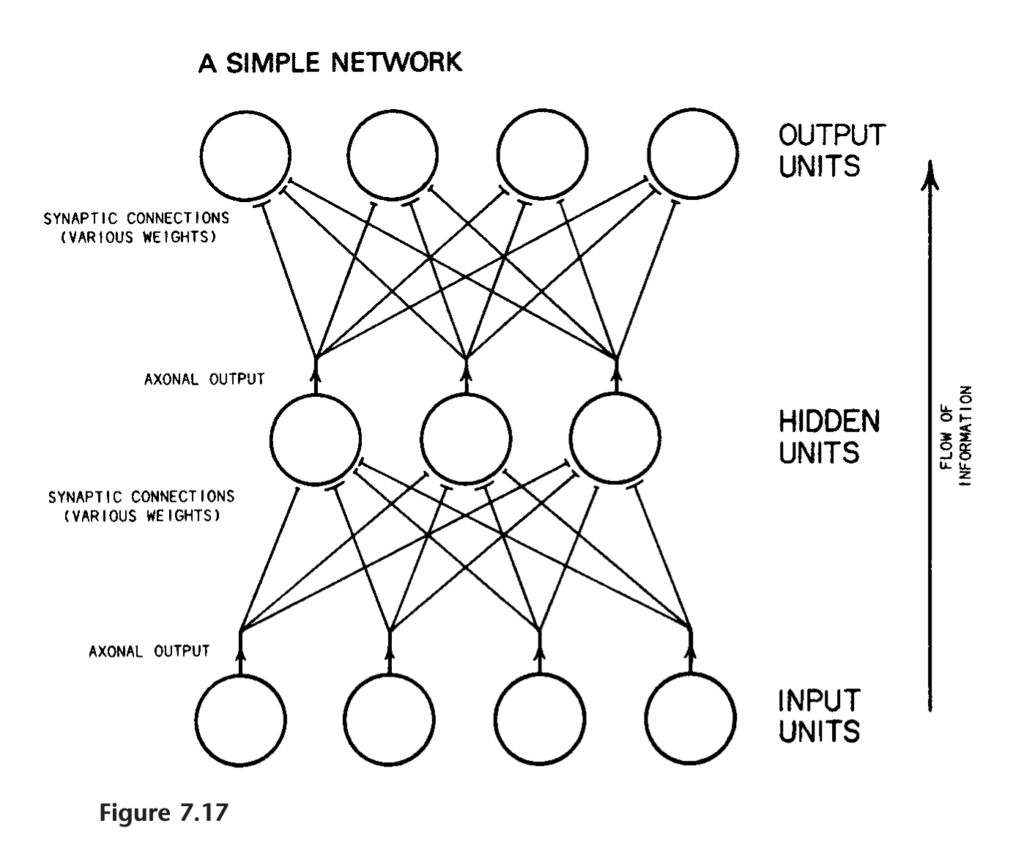

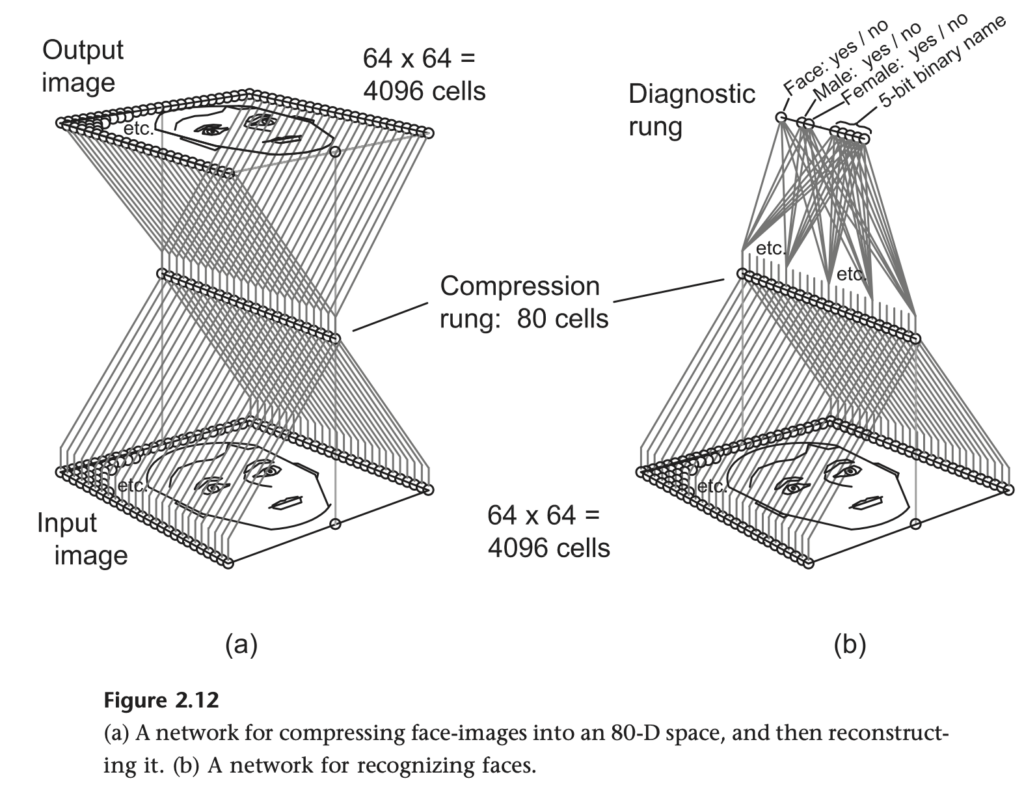

ANNs are constructed from layers of “neurons,” each of which has a level of activation that might be represented with a decimal number from 0 to 1. In a densely connected network,3 each neuron at one layer sends its activation value to every neuron at the next layer, multiplied by a synaptic weight, which might also vary from 0 to 1, or if inhibitory connections are permitted, from -1 to 1. In a simple “feed-forward” network, the activation value of a node at one layer will be a function of the activation values at the previous layer and the connection weights. In a “recurrent” neural network, some of the connections run in the opposite direction, feeding activation back to earlier layers. The first layer will represent some form of input (with each node encoding, e.g., parts of the values of a bitmap of an image, or of the waveform of a sound) and the final layer will represent some form of output (with each node encoding, e.g., part of a classification symbol, or of a motor response).
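For readers who find code easier to follow than prose, here is a minimal sketch of how one densely connected layer feeds the next. The function names, the example numbers, and the choice of a sigmoid as the "function of the activation values at the previous layer and the connection weights" are all my own assumptions for illustration; real networks use a variety of such functions.

```python
import math

def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def node_activation(prev_activations, incoming_weights):
    """Activation of one node: the weighted sum of the previous layer's
    activations, passed through a squashing function (assumed: sigmoid)."""
    total = sum(a * w for a, w in zip(prev_activations, incoming_weights))
    return sigmoid(total)

def layer_activations(prev_activations, weight_rows):
    """Densely connected layer: every node receives every previous activation."""
    return [node_activation(prev_activations, row) for row in weight_rows]

# Hypothetical 3-node input layer feeding a 2-node layer.
inputs = [0.9, 0.1, 0.5]
weights = [
    [0.8, -0.4, 0.3],   # incoming weights for node 0 (one inhibitory connection)
    [-0.6, 0.7, 0.2],   # incoming weights for node 1
]
outputs = layer_activations(inputs, weights)
print(outputs)  # two activation values, each strictly between 0 and 1
```

With these made-up numbers, node 0 ends up more active than node 1, because the strongly active first input excites the former and inhibits the latter.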

Networks that start with randomly determined weights are trained on large sets of data using a training algorithm (e.g., back-propagation, Hebbian learning), which results in a reliable pattern of responses determined by the training data set and the particular training algorithm. The activation of a given layer composed of N neurons can be represented as an N-dimensional vector; each neuron is an axis of the vector space. The transformation from one layer to the next can be represented as a matrix whose dimensions are given by the number of neurons in the two layers. The operation of the network can be computed by a traditional digital computer doing basic linear algebra, the math being a little more complicated for a recurrent network.
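The vector-and-matrix picture can be sketched in a few lines. This is only an illustration under my own assumptions: the layer sizes (4, 3, and 2 neurons), the weight values, and the `clamp` step that keeps activations between 0 and 1 are all hypothetical, and the weights are written by hand rather than learned.

```python
def mat_vec(matrix, vector):
    """Multiply an (n_out x n_in) weight matrix by an n_in-dimensional vector."""
    if any(len(row) != len(vector) for row in matrix):
        raise ValueError("each row needs one weight per neuron in the previous layer")
    return [sum(w * a for w, a in zip(row, vector)) for row in matrix]

def clamp(vector):
    """Keep every activation in [0, 1] (an assumed, very simple nonlinearity)."""
    return [min(1.0, max(0.0, x)) for x in vector]

def forward(layers, activation):
    """Pass an activation vector through each weight matrix in turn."""
    for weights in layers:
        activation = clamp(mat_vec(weights, activation))
    return activation

def shape(matrix):
    return (len(matrix), len(matrix[0]))

# A 4-neuron layer feeding a 3-neuron layer feeding a 2-neuron layer.
w1 = [[0.5, 0.1, 0.2, 0.0],
      [-0.5, 0.3, 0.4, 0.8],
      [0.2, 0.2, 0.2, 0.4]]   # 3 x 4: rows = next layer, columns = previous layer
w2 = [[1.0, 0.5, -0.5],
      [0.5, 0.5, 1.0]]        # 2 x 3

x = [1.0, 0.0, 0.5, 0.25]      # a point in a 4-dimensional activation space
y = forward([w1, w2], x)       # a point in a 2-dimensional activation space
print(shape(w1), shape(w2), y)
```

Each matrix has one row per neuron in the receiving layer and one column per neuron in the sending layer, which is all that "dimensions given by the number of neurons in the two layers" amounts to.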

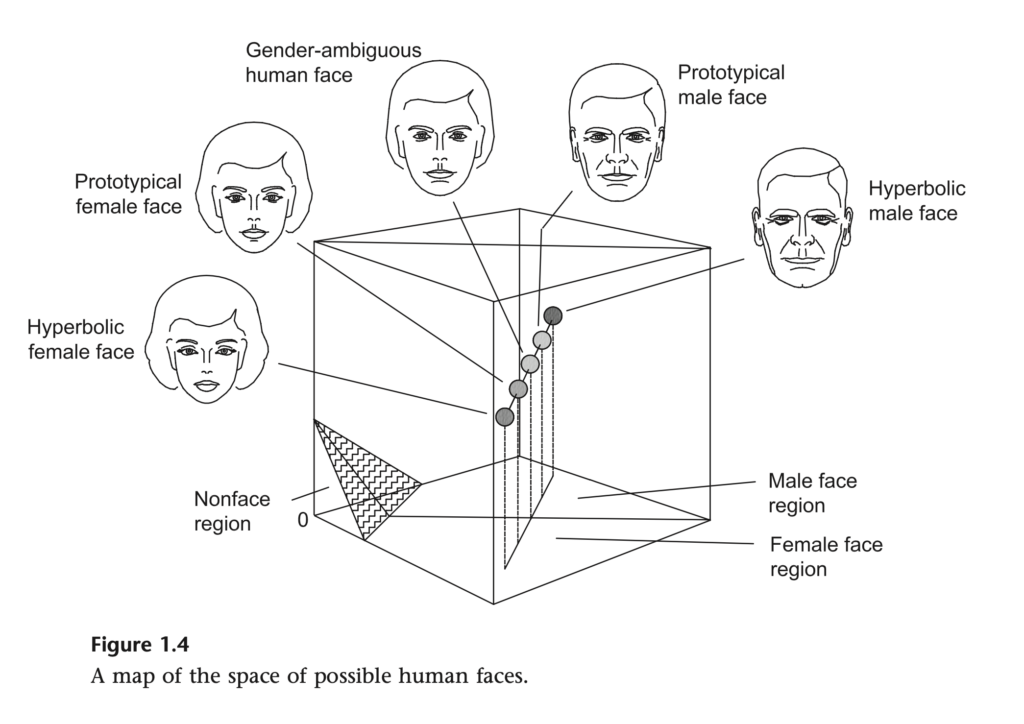

On Paul’s view, articulated in its most up-to-date form in Plato’s Camera (2012), the best way to represent what the network is doing is by thinking of it in terms of the hypergeometry of these high-dimensional vector spaces. What the learned synaptic weights come to represent is the set of relationships of similarity and difference in the input space, as transformed by the preferred or expected output. The way to think of these representations is in terms of maps; these are not two-dimensional maps of a spatial territory, but rather N-dimensional maps of the abstract relations of similarity and difference in whatever phenomena the network is sensitized to. This high-dimensional map is a conceptual space. Concepts are dense regions (attractors) in this sculpted activation space. Cognition is the process of vector transformation accomplished by the connected maps of the network. Ampliative inference is the capacity of such networks to produce an accurate output even when the input is noisy or incomplete, a process known as “vector completion.” Purely feed-forward networks are quite adequate for conceptual spaces of static features, while recurrent networks are more apt for dealing with temporal (or causal) processes.
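To make “vector completion” a little more concrete, here is a toy sketch using a Hopfield-style recurrent network. This is a classic textbook stand-in that I am swapping in for illustration, not an example taken from Paul’s work. Two stored patterns play the role of attractors, the weights are set by a simple Hebbian rule, units are coded as +1 or -1, and a 0 in the cue marks a missing value; all of these choices, and the function names, are my own assumptions.

```python
def hebbian_weights(patterns):
    """Hebbian rule: strengthen the link between units that are active together."""
    n = len(patterns[0])
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:          # no self-connections
                    w[i][j] += p[i] * p[j]
    return w

def complete(weights, cue, max_steps=10):
    """Update all units together until the state stops changing."""
    state = list(cue)
    for _ in range(max_steps):
        new_state = []
        for i, row in enumerate(weights):
            h = sum(w * s for w, s in zip(row, state))
            # On a tie (h == 0), the unit keeps its current value.
            new_state.append(1 if h > 0 else -1 if h < 0 else state[i])
        if new_state == state:
            break
        state = new_state
    return state

# Two stored "prototypes" (the attractors of this tiny activation space).
proto_a = [1, 1, 1, 1, -1, -1, -1, -1]
proto_b = [1, -1, 1, -1, 1, -1, 1, -1]
w = hebbian_weights([proto_a, proto_b])

incomplete_a = [1, 1, 1, 1, -1, 0, 0, 0]     # last three values missing
noisy_b = [1, -1, 1, -1, 1, -1, 1, 1]        # last value flipped

print(complete(w, incomplete_a) == proto_a)  # True
print(complete(w, noisy_b) == proto_b)       # True
```

In both cases the network settles into the nearest stored pattern, filling in the missing values in the first case and correcting the flipped one in the second, which is the sense in which a degraded input still yields an accurate output.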

So, for example, an ANN that learns to recognize faces and classify them as male or female comes to represent the abstract space of possible human faces, with distinct regions that represent male and female faces, on a spectrum that includes hyperbolic male and female faces at the extremes, prototypically male and female faces as major attractors, and gender-ambiguous faces at the border between regions. Insofar as the faces in the training data constitute a representative sample of the population the network will later be confronted with, and insofar as male/female represents a genuine dimension of similarity-difference in the population of human faces, we can expect that this map comes to represent an aspect of the space of possible human faces (in a population) very accurately.4

From this starting point, Churchland provides us with quite rich accounts of cognition, learning, representation, meaning, knowledge, categorization, universals, explanation, understanding, procedural knowledge, abduction, analogical reasoning, accuracy of representation, the theory-ladenness or conceptual structuring of perception, scientific theories, theory-change, intertheoretic reduction, underdetermination, the aims of science, scientific realism, the cognitive role of (sociocultural) language, situated and extended cognition, metaethics, moral psychology, and more besides. There is plenty of room for debate about the adequacy of each of Paul’s views on these topics, but no room to doubt that in the first instance, the core ideas of the view are worked out for ANNs (for Paul, on the hypothesis that these provide our best current model for what is going on in the mind-brain of actual humans). It is worth noting, then, that whether or not you think ANNs are a good model for the human brain, the philosophical framework that Paul has articulated does apply nicely to ANNs.5 For anyone interested in how we might think about large language models (LLMs) and other contemporary ANN-based A.I., Paul Churchland’s work seems to me to represent a mandatory starting-point.

There is, of course, an obvious objection: the artificial neural networks of 2026 are not the same technology as the ANNs of 1986. The development of deep learning in 2012, generative adversarial networks in 2014, diffusion models in 2015, and the transformer architecture in 2017 were all steps that made the current generation of highly capable “generative A.I.” (GAI) possible. Recurrent networks are mostly out, replaced by transformers and convolutional networks for dealing with temporal tasks. More sophisticated forms of unsupervised and self-supervised learning are used in addition to back-propagation and Hebbian learning for many applications. All of these developments post-date Paul’s major works on the topic. How can we expect, then, to gain fresh insight from Churchland’s work that is now at least a decade and a half old?

Actually, while these developments represent quantum leaps in the technical capability of ANNs, they are mostly insignificant from a philosophical point of view. The fundamental representational capacity of LLMs and other contemporary GAI is still captured by multi-layer populations of neuronal nodes connected by synaptic weights. Generative models and transformers do some of the same work that Paul wanted to do with recurrent networks (in terms of dynamic, nonlinear, and generative processes), which makes GAI less biologically realistic, but for the purposes of Paul’s account, these differences do not seem to me significant. Hebbian learning is still at the basis of many methods of unsupervised learning. Surely, some fine details of Paul’s account will have to be modified, but if one is inclined to think that Churchland’s framework for thinking about intelligence in ANNs is adequate to the systems from the late twentieth century, then it is reasonable to think that it will apply quite well, mutatis mutandis, to LLMs and GAI broadly.

Another relevant difference worth considering is that the kinds of networks Paul typically discusses in his work have perceptual or quasi-perceptual inputs (images, sounds, colors) and perceptual, motor, or linguistic outputs. LLMs have linguistic inputs and linguistic outputs (though multimodal models are becoming increasingly central to the technology). Given his focus on ANNs as a model for brains, when Paul considers language as a part of the system, he is typically thinking about it alongside perceptual and motor modalities. But insofar as corpora of human language have structures (patterns), we should expect that LLMs will embed or comprise cognitive maps of those structures, maps that conceptualize and categorize, know and understand. Suitably capable LLMs can make inferences on the basis of those structures. And if large corpora of human language embed human knowledge about the world, we should expect that the cognitive maps of LLMs will also know something about the world. But insofar as this knowledge is had secondhand, through a medium Paul frequently critiqued for its representational infelicities, Paul also gives us a strong philosophical basis from which to critique, and potentially improve, GAI.

It is very common to see confident assertions that LLMs mimic language use but do not really understand or use it the way that we do, that LLMs do not really reason or think, that they cannot know or understand things. On examination, these claims are often grounded in a folk-psychological understanding about how we think, know, or use language, or, at best, in ideas from philosophy or cognitive psychology that are profoundly disengaged from any understanding of the underlying mechanisms of the brain. LLMs do not reason, the thinking goes, because reasoning is an ordered, linguaformal process that conforms to logical or epistemic norms; they do not know because justification must be based on underlying world-models which LLMs lack. But if Churchland is right, reasoning just is the transformation of vectors in a high-dimensional activation space that maps the relevant cognitive realm; that activation space just is the relevant world-model (map) that the LLM relies on in understanding the world.

So Paul might have it, I think. Maybe these views won’t hold up to closer scrutiny, but I hope it is clear why his is a starting point worth engaging with, if we hope to make sense of the idea that artificial neural networks like ChatGPT are “intelligent.”

Notes

- In the spirit of full disclosure, Paul was my dissertation chair. I will mostly refer to him as “Paul” throughout this piece, both because of familiarity and to distinguish his contributions to this issue from those of his spouse and frequent collaborator, Patricia Churchland. ↩︎

- This marks an important division of labor between the work of Paul and Patricia Churchland. Pat’s work has tended to focus more on neurobiology and Paul’s more on neural network models, a field at the intersection of computational neuroscience and computer science. Even Patricia Churchland and Terrence Sejnowski’s The Computational Brain is much more biologically grounded than most of the work that Paul relies on and interprets. The division of labor is, of course, porous, given the frequency of their collaboration and the degree to which they influence each other, but it is nonetheless a clear difference to those familiar with both of their oeuvres. ↩︎

- In a sparsely connected network, the neurons at layer N will send signals to only some, but not all, of the neurons at layer N+1. ↩︎

- Whether and to what extent this particular example, or the view at large, provides resources for, or a target for, feminist critique is left as an exercise for the reader. ↩︎

- Pace some small quibbles concerning Paul’s account of connectionist networks. See Laakso and Cottrell, “Churchland on Connectionism” (2006) in Paul Churchland. ↩︎
